posted 2026-04-09

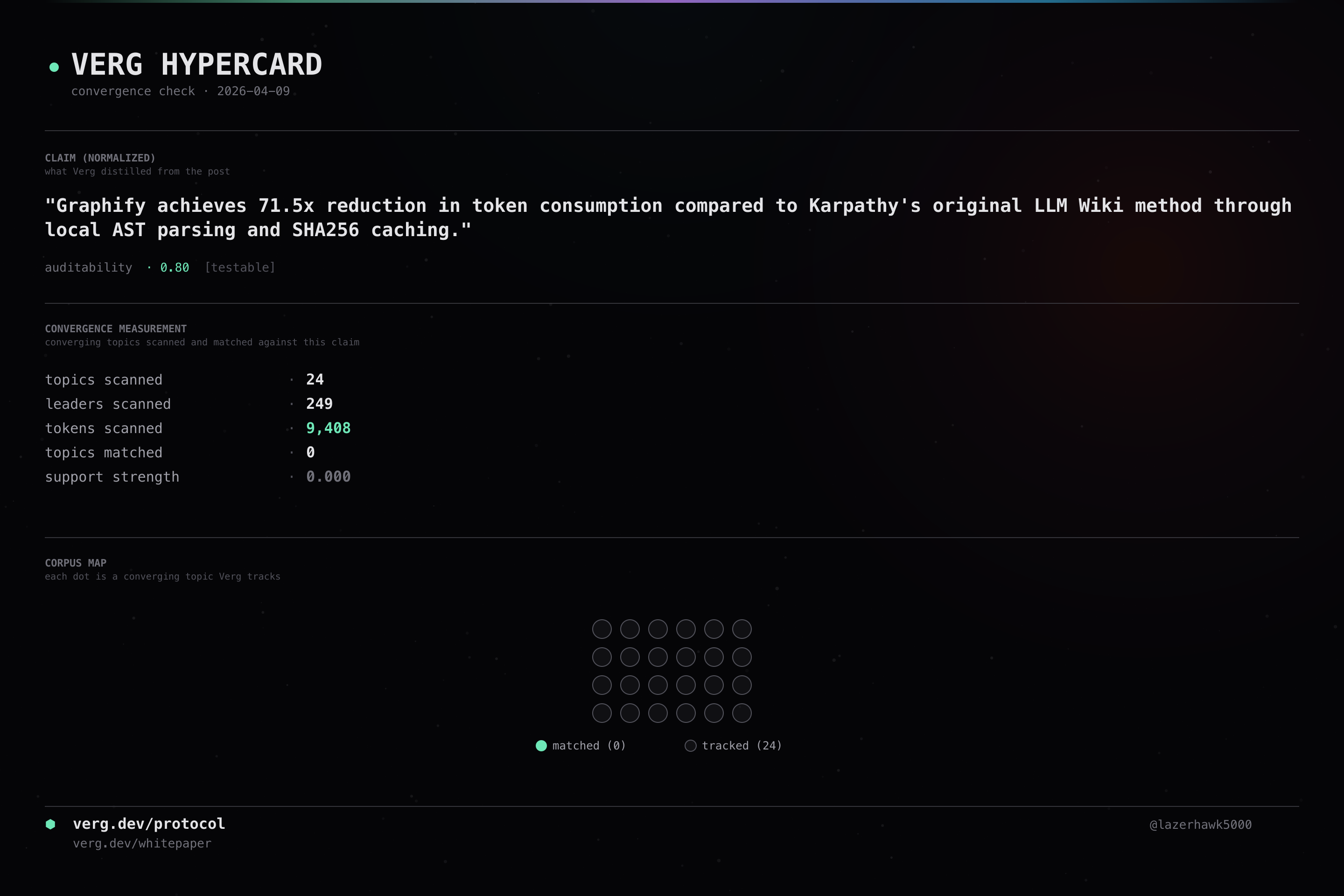

Graphify achieves a 71.5x reduction in token consumption compared to Karpathy's original LLM Wiki method through local AST parsing and SHA256 caching.

claim by @Connected_Data · source
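
For readers unfamiliar with the two techniques named in the claim, the following is a minimal sketch of how local AST parsing can be combined with SHA-256 content caching so that unchanged files are never re-parsed. It is not Graphify's implementation: the function `extract_symbols`, the in-memory `_cache`, and the `stats` counter are all hypothetical names chosen for illustration, and the sketch makes no attempt to reproduce the 71.5x figure.

```python
import ast
import hashlib

# Hypothetical in-memory cache: SHA-256 digest of the source text -> extracted names.
_cache: dict[str, list[str]] = {}

# Hypothetical counter so that cache hits can be observed from outside.
stats = {"parses": 0}


def extract_symbols(source: str) -> list[str]:
    """Return the names of functions and classes defined in `source`.

    The result is cached under the SHA-256 digest of the text, so an
    identical file is parsed only once.
    """
    digest = hashlib.sha256(source.encode("utf-8")).hexdigest()
    if digest in _cache:
        return _cache[digest]  # cache hit: skip parsing entirely

    stats["parses"] += 1
    tree = ast.parse(source)  # local parse, no model call involved
    names = [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
    ]
    _cache[digest] = names
    return names
```

In a setup like this, only the compact list of extracted names (rather than the full file text) would need to be passed downstream, and a file whose content hash has not changed costs nothing on later runs. Whether that accounts for the reduction stated in the claim is what the audit evaluates, not something this sketch demonstrates.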

audit_id: 8cd8060e849c240c

This is a public audit record produced by Verg’s signal/noise probe. See the protocol page for methodology, and all audits for the feed.